Models

On this page you can find a description and source code of computational models of reading and word recognition, the hippocampus, the broader medial temporal lobe and memory systems, the eye movement system, and of strategy analysis for the Weather task (models are, in a sense, data analysis tools too).

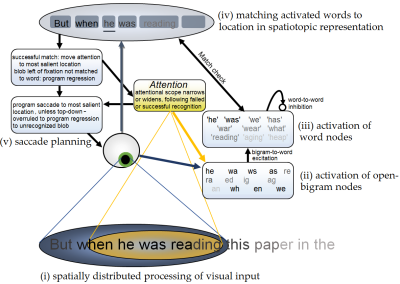

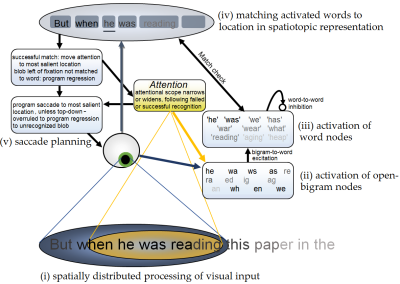

The OB1 model of reading and word recognition

The study of reading suffers a bit from Balkanization, with every step from letter recognition to text comprehension studied in a different subfield. To reverse this, Snell, van Leipsig, Grainger and Meeter (2018) presented OB1 Reader, the first computational model of reading that integrates the first step in reading (recognizing single words) with the second step, moving one's eyes through text and building up sentence representations.

Planned extensions are to address reading aloud, to understand how children learn to read and build up word representations, and to have the model develop a representation of text meaning.

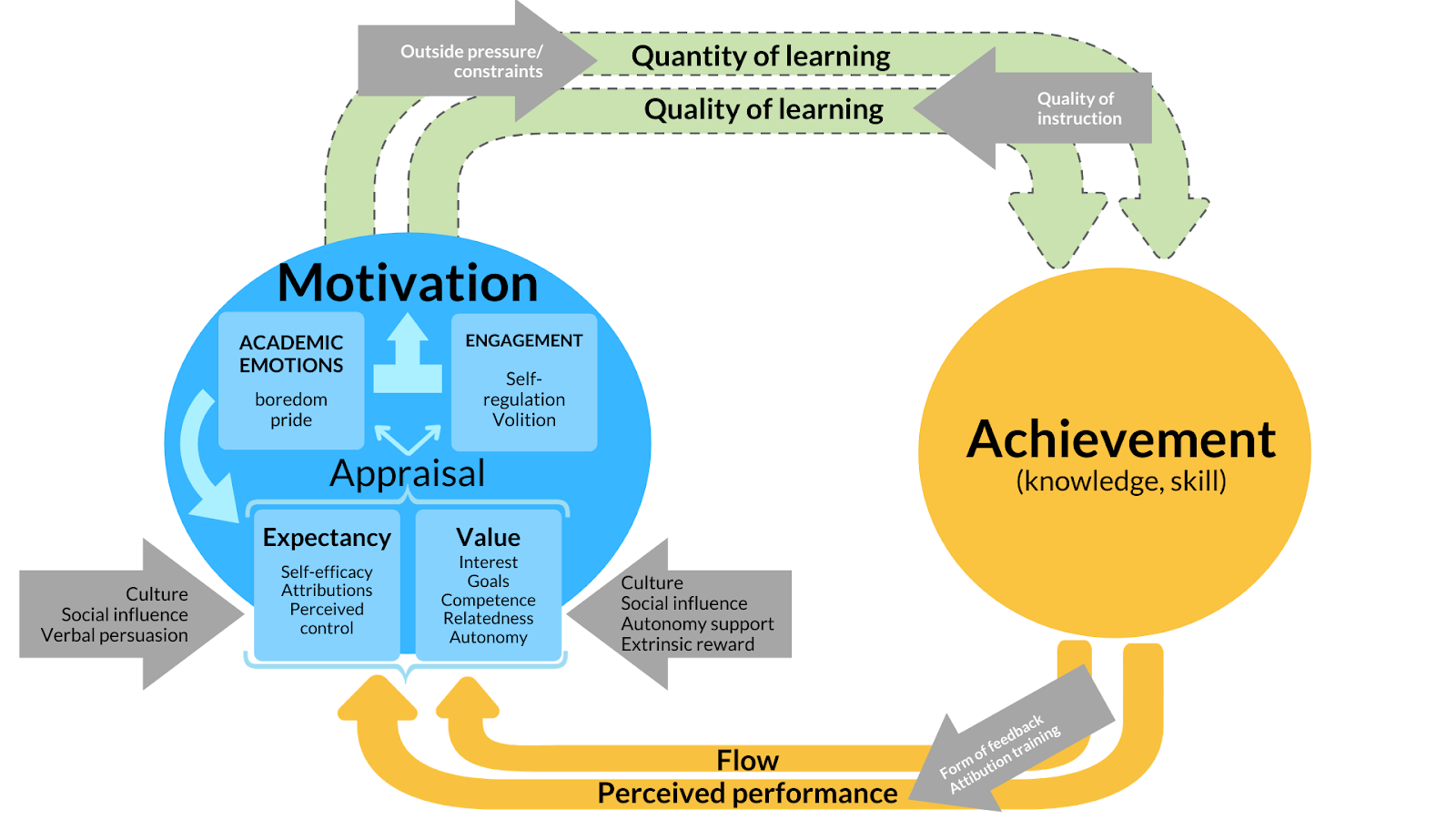

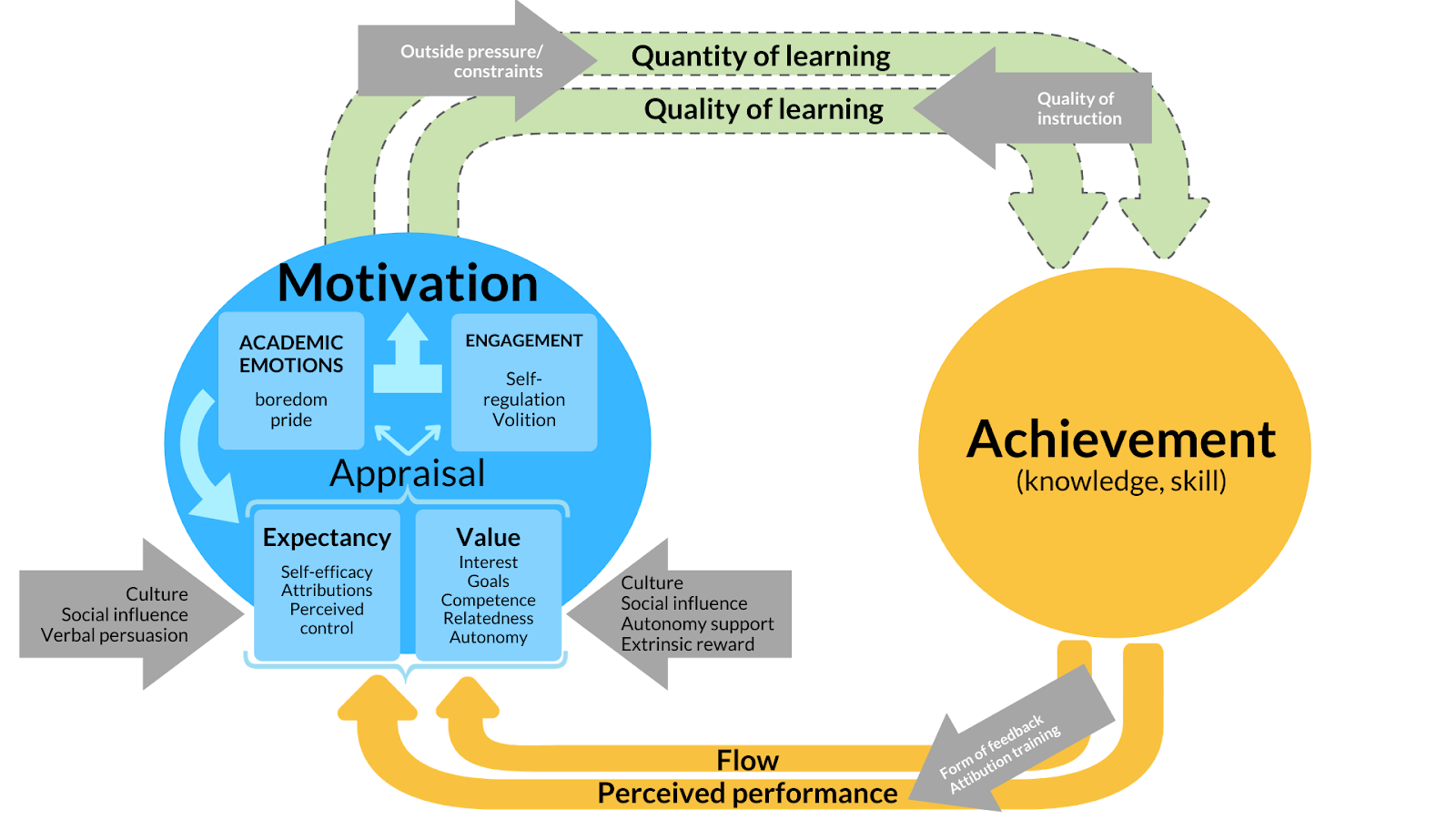

Summary Model of Motivation

Students who are well-motivated tend to achieve more in education than students who are not. However, that truism is only where it starts to get interesting. There is a plethora of theories of motivation that purport to explain how students become motivated. Although the theories all use their own vocabulary, there are obvious similarities in how they describe the mechanisms of motivation. With many collaborators, I therefore made a summary model that describes how motivation and achievement interact, and what factors support motivation. We also describe all the things that we do not yet know about motivation-achievement interactions, which is quite a list. We elaborate on a research agenda that may fill the blanks in our understanding of motivation and achievement.

- Blog that explains what should count as a theory of academic motivation, and the resulting list.
- Paper that describes the summary model and research agenda.

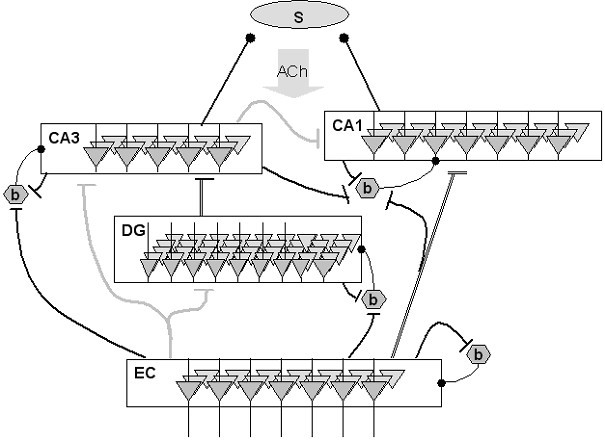

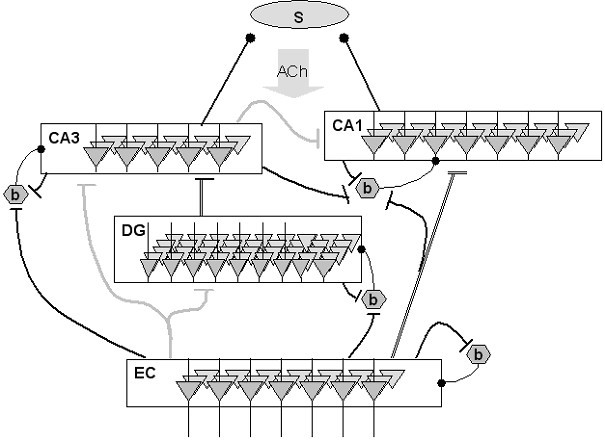

Hippocampal physiology

Meeter et al. (2004) proposed a detailed model of the hippocampus proper that focused on the dynamics of acetylcholine. It was suggested previously by Hasselmo and colleagues that there are two modes in hippocampal functioning: a storage mode in which information is stored without interference from retrieved old knowledge, and a retrieval mode in which old memories are activated but new ones are not laid down. Shifting between these two modes might be under the control of acetylcholine levels, as set by an autoregulatory hippocampo-septo-hippocampal loop. The model investigated how such a mechanism might operate, taking into account the major hippocampal subdivisions, oscillatory population dynamics and the time scale on which acetylcholine exerts its effects in the hippocampus.

The model assumes that hippocampal mode shifting is regulated by a novelty signal generated in the hippocampus. The simulations suggest that this signal originates in the dentate gyrus. Novel patterns presented to this structure lead to brief periods of depressed firing in the hippocampal circuitry. During these periods an inhibitory influence of the hippocampus on the septum is lifted, leading to increased firing of cholinergic neurons. The resulting increase in acetylcholine release in the hippocampus causes network dynamics that favor learning over retrieval. Resumption of activity in the hippocampus leads to the reinstatement of inhibition.
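The loop described above can be illustrated with a deliberately minimal sketch. It is not the published model: the single hippocampal and septal units, the firing values, the time constant `tau_ach` and the function name `simulate_ach_loop` are all assumptions made for illustration. It only shows the qualitative sequence of novelty, depressed hippocampal firing, released septal inhibition, and a slow rise and fall of acetylcholine.

```python
def simulate_ach_loop(novel_steps, n_steps=60, tau_ach=5.0):
    """Toy septo-hippocampal loop; all values are illustrative assumptions."""
    ach = 0.0
    trace = []
    for t in range(n_steps):
        # Assumption: a novel pattern briefly depresses hippocampal firing.
        hippocampus = 0.2 if t in novel_steps else 1.0
        # The hippocampus inhibits the septum, so low firing lifts inhibition.
        septum = max(0.0, 1.0 - hippocampus)
        # Acetylcholine follows septal firing with a slow first-order lag.
        ach += (septum - ach) / tau_ach
        trace.append(ach)
    return trace


# Novel input between time steps 10 and 19 (hypothetical schedule).
trace = simulate_ach_loop(novel_steps=range(10, 20))
# Assumed threshold of 0.3 separating the two modes.
modes = ["storage" if level > 0.3 else "retrieval" for level in trace]
```

In this sketch the mode labels switch to storage a few steps after novelty onset and return to retrieval once hippocampal firing resumes, with the delay set by the assumed time constant.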

In a later paper, Meeter et al. (2006) addressed the effects of serotonin on memory and the hippocampus within the same model.

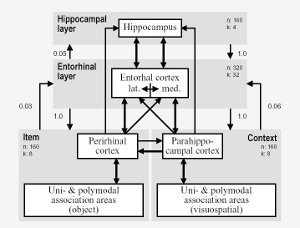

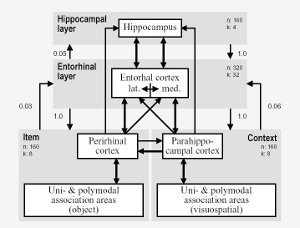

Medial temporal lobe and schizophrenia

In schizophrenia, the largest cognitive impairment is usually found in episodic memory. The cause of these deficits is unclear. They do not seem to be related to so-called positive symptoms (e.g., delusions), but they also do not correlate well with negative symptoms. Talamini et al. (2004) proposed that episodic memory impairments in schizophrenia originate from reduced parahippocampal connectivity. They developed an abstract medial temporal lobe model that simulates normal performance on a variety of episodic memory tasks. The effects of reducing parahippocampal connectivity in the model (from perirhinal and parahippocampal cortex to entorhinal cortex and from entorhinal cortex to hippocampus) were evaluated and compared with findings in schizophrenia patients. Alternative in silico neuropathologies, increased noise and loss of hippocampal neurons, were also evaluated. In the model, parahippocampal processing subserves integration of different cortical inputs to the hippocampus and feature extraction during recall. Reduced connectivity in this area resulted in a pattern of deficits that closely mimicked the impairments in schizophrenia, including a mild recognition impairment and a more severe impairment in free recall. Furthermore, the "schizophrenic model" was not differentially sensitive to interference, also consistent with behavioral data. Neither increased noise levels, nor a reduction of hippocampal nodes in the model, reproduced this characteristic memory profile. Talamini and Meeter (2009) followed up on the original paper by showing that it can also explain deficits in context processing, and later corroborated a prediction made by the model in patients with first-episode schizophrenia (Talamini et al., 2010).

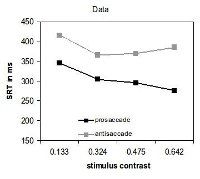

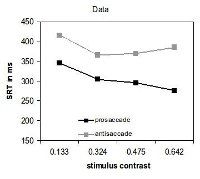

The eye movement system

Meeter et al. (2010) presented a model of the eye movement system in which the programming of an eye movement is the result of the competitive integration of information in the superior colliculi. This brain area receives input from occipital cortex, the frontal eye fields, and the dorsolateral prefrontal cortex, on the basis of which it computes the location of the next saccadic target. Two critical assumptions in the model are that cortical inputs are not only excitatory, but can also inhibit saccades to specific locations, and that the superior colliculi continue to influence the trajectory of a saccade while it is being executed. With these assumptions, we accounted for many neurophysiological and behavioral findings from eye movement research. Interactions within the saccade map are shown to account for effects of distractors on saccadic reaction time and saccade trajectory, including the global effect and oculomotor capture. In addition, the model accounts for express saccades, the gap effect, saccadic reaction times for antisaccades, and recorded responses from neurons in the superior colliculi and frontal eye fields in these tasks. Later, we showed how this model can account for saccades that deviate away from distractors, which could until then only be explained through implausible assumptions about inhibition (Kruijne et al., 2014).
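To give a feel for what integration on a saccade map means, here is a minimal sketch that is much simpler than the published model. It assumes a one-dimensional map, Gaussian input profiles, and a center-of-mass readout of the saccade endpoint; the function names, map size and widths are hypothetical. It illustrates the global effect (landing between a target and a nearby distractor) and how an inhibitory input at the distractor location can cancel that pull.

```python
import math


def gaussian(x, center, width):
    """Unnormalized Gaussian activity profile around `center`."""
    return math.exp(-((x - center) ** 2) / (2.0 * width ** 2))


def saccade_endpoint(inputs, map_size=101, width=8.0):
    """Center of mass of a 1-D map; `inputs` holds (location, strength) pairs.

    Negative strengths stand for inhibitory inputs (an assumption of this
    sketch, loosely following the idea that cortical inputs can inhibit
    saccades to specific locations).
    """
    activity = []
    for x in range(map_size):
        net = sum(strength * gaussian(x, loc, width) for loc, strength in inputs)
        activity.append(max(0.0, net))  # firing rates cannot go below zero
    total = sum(activity)
    if total == 0.0:
        return None  # no activity left on the map, so no saccade is programmed
    return sum(x * a for x, a in enumerate(activity)) / total


target_only = saccade_endpoint([(50, 1.0)])
with_distractor = saccade_endpoint([(50, 1.0), (60, 0.5)])
distractor_inhibited = saccade_endpoint([(50, 1.0), (60, 0.5), (60, -0.5)])
```

With a distractor at position 60 the endpoint lands between the two stimuli, closer to the stronger target; adding matched inhibition at the distractor location brings it back to the target. The real model adds dynamics over time, which this static readout leaves out.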

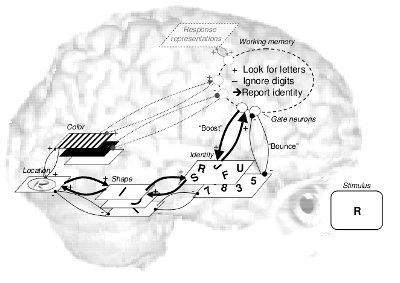

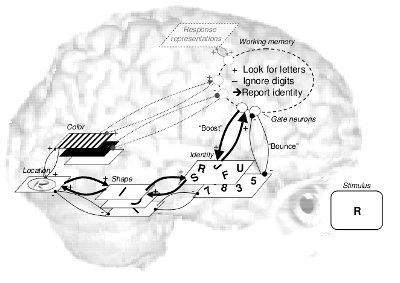

Entry into working memory and the attentional blink

What is the time course of visual attention? Attentional blink studies have found that the second of two targets is often missed when presented within about 500 ms from the first target, resulting in theories about relatively long-lasting capacity limitations or bottlenecks. Earlier studies, however, have reported quite the opposite finding: attention is transiently enhanced, rather than reduced, for several hundreds of milliseconds after a relevant event. Olivers and Meeter (2008) present a general theory as well as a working computational model that integrate these findings. There is no central role for capacity limitations or bottlenecks. Central is a rapidly responding gating system (or attentional filter) that seeks to enhance relevant and suppress irrelevant information. When items sufficiently match the target description, they elicit transient excitatory feedback activity (a "boost" function), meant to provide access to working memory. However, in the attentional blink task, the distractor after the target is accidentally boosted, resulting in a subsequent strong inhibitory feedback response (a "bounce"), which in effect closes the gate to working memory. The theory explains many findings that are problematic for limited-capacity accounts, including a new experiment showing that the attentional blink can be postponed.
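The boost and bounce idea can be sketched as a very small gating loop. This is a toy under stated assumptions, not the Olivers and Meeter (2008) model: the function name `run_stream`, the gains, the decay and the item strengths are all made up for illustration. Each item's activation is scaled by the current gate; targets then feed back excitation and distractors feed back inhibition, both in proportion to how strongly the item was activated.

```python
def run_stream(targets, n_items=12, base_target=1.0, base_distractor=0.3,
               boost_gain=3.0, bounce_gain=2.0, decay=0.5):
    """Toy boost-and-bounce gate over a rapid serial stream of items.

    `targets` is the set of stream positions holding targets; every other
    position holds a distractor. All parameter values are assumptions.
    """
    gate = 0.0
    activations = []
    for position in range(n_items):
        is_target = position in targets
        base = base_target if is_target else base_distractor
        # The gate multiplies bottom-up strength; activation cannot be negative.
        activation = max(0.0, base * (1.0 + gate))
        activations.append(activation)
        # Feedback arrives one item later: targets boost, distractors bounce.
        feedback = boost_gain * activation if is_target else -bounce_gain * activation
        gate = decay * gate + feedback
    return activations


lag1 = run_stream({5, 6})  # second target directly after the first
lag2 = run_stream({5, 7})  # one distractor in between
```

With these assumed values, a second target at lag 1 rides on the boost elicited by the first target, whereas at lag 2 it meets the bounce triggered by the accidentally boosted distractor and ends up weaker than the first target was. The blink in this toy is far shorter than in real data, because nothing here was fitted.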

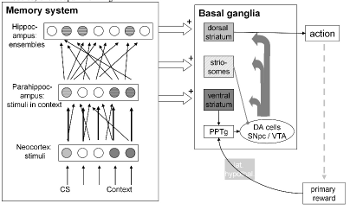

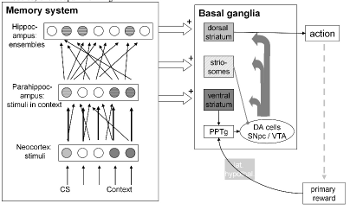

Multiple memory stores and classical conditioning

Why does the brain contain more than one memory system? What's the relation between all these systems? Meeter et al. (2004) do not give much of a rationale, but their computational model of memory-oriented brain regions contains some organizing principles. They distinguish a hierarchy of episodic memory systems, in which the main difference between stores is the level of integration of representations: from single stimuli in the cortex to integrated representations of the whole situation in the hippocampus. These stores are inputs to a number of output regions that themselves store associations between inputs and behavioral responses, such as with fear responses in the amygdala. In Meeter et al. (2004), findings from classical conditioning are analyzed within the model. It describes how a familiarity signal may arise from parahippocampal cortices, giving a novel explanation for the finding that the neural response to a stimulus in these regions decreases with increasing stimulus familiarity. Recollection is ascribed to the hippocampus proper. It is shown how the properties of episodic representations in the neocortex, parahippocampal gyrus and hippocampus proper may explain phenomena in classical conditioning. The model reproduces the effects of hippocampal, septal, and broad hippocampal region lesions on contextual modulation of classical conditioning, blocking, learned irrelevance, and latent inhibition.

In a later paper (Meeter et al., 2009), genetic algorithms were used to investigate the complexity of memory. Model animals were constructed containing a dorsal striatal layer that controlled actions, and a ventral striatal layer that controlled a dopaminergic learning signal. Both layers could gain access to the Meeter et al. (2004) memory hierarchy, but such access was penalized as energy expenditure. Model animals were then selected on their fitness in simulated operant conditioning tasks. Results suggest that having access to multiple memory stores and their representations is important in learning to regulate dopamine release, as well as in contextual discrimination. For simple operant conditioning, as well as stimulus discrimination, hippocampal compound representations turned out to suffice, a counterintuitive result given findings that hippocampal lesions tend not to affect performance in such tasks. However, there is in fact evidence to support a role for compound representations and the hippocampus in even the simplest conditioning tasks.
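The selection logic, task fitness minus a penalty for every memory store that is accessed, can be sketched with a tiny genetic algorithm. This is an illustration under strong assumptions and not the simulation from the paper: a genome here is just three bits saying which stores are accessed, a task is reduced to the set of stores it requires, and the names `fitness` and `evolve`, the access cost and the mutation scheme are hypothetical.

```python
import random

STORES = ("cortex", "parahippocampal", "hippocampus")


def fitness(genome, required, access_cost=0.1):
    """Reward for solving the task minus an energy cost per accessed store."""
    solved = all(genome[index] for index in required)
    reward = 1.0 if solved else 0.0
    return reward - access_cost * sum(genome)


def evolve(required, pop_size=20, generations=30, seed=0):
    """Truncation selection with one-bit mutation; parents are kept (elitism)."""
    rng = random.Random(seed)
    population = [tuple(rng.randint(0, 1) for _ in STORES) for _ in range(pop_size)]
    history = []  # best fitness at the start of each generation
    for _ in range(generations):
        ranked = sorted(population, key=lambda g: fitness(g, required), reverse=True)
        history.append(fitness(ranked[0], required))
        parents = ranked[: pop_size // 2]
        children = []
        for parent in parents:
            child = list(parent)
            flip = rng.randrange(len(child))  # flip one randomly chosen bit
            child[flip] = 1 - child[flip]
            children.append(tuple(child))
        population = parents + children
    best = max(population, key=lambda g: fitness(g, required))
    return best, history


# Hypothetical task that only needs the hippocampal store (index 2).
best, history = evolve(required={2})
```

Because access is penalized, a genome that opens only the stores a task needs scores higher than one that opens everything, which is the pressure that lets such a simulation ask which stores are worth their cost.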

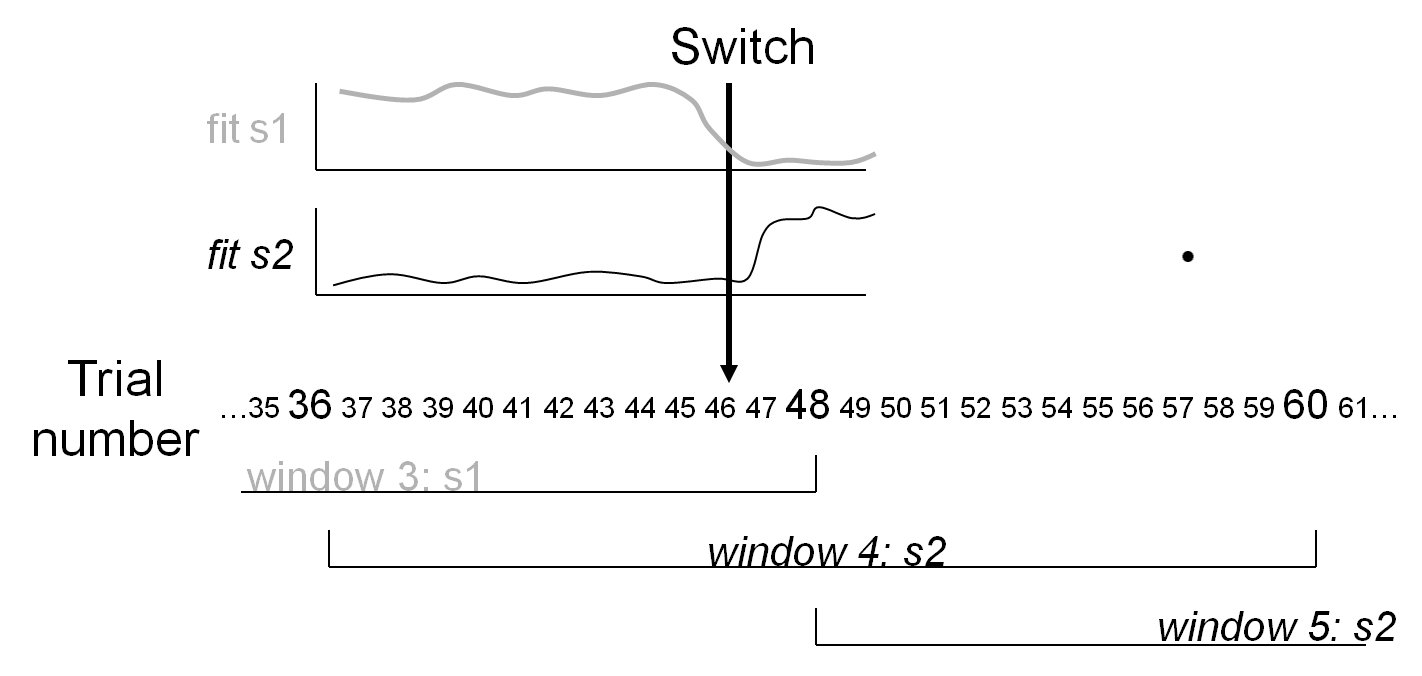

Strategy analysis for Weather prediction & other multicue learning tasks

The "weather prediction" task is a widely used task for investigating probabilistic category learning, in which various cues are probabilistically (but not perfectly) predictive of class membership. This means that a given combination of cues sometimes belongs to one class and sometimes to another. Prior studies showed that subjects can improve their performance with training, and that there is considerable individual variation in the strategies subjects use to approach this task. Strategy analysis of probabilistic categorization attempts to identify the strategy followed by a participant. Monte Carlo simulations show that the analysis can indeed reliably identify such a strategy if it is used, and can identify switches from one strategy to another (Meeter et al., 2006). Analysis of data from normal young adults shows that the fitted strategy can predict subsequent responses. Moreover, learning is shown to be highly nonlinear in probabilistic categorization. Analysis of performance of patients with dense memory impairments due to hippocampal damage shows that although these patients can change strategies, they are as likely to fall back to an inferior strategy as to move to more optimal ones (Meeter et al., 2008).
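The core idea of strategy analysis, comparing a participant's responses with what each candidate strategy would have predicted, can be sketched as follows. This is a minimal illustration and not the Excel or Matlab analysis itself: the coding of outcomes (1 for rain, 0 for sun), the candidate strategies, the cue weights, the example trials and all function names are assumptions, and the fit measure is simply the proportion of matching responses.

```python
def one_cue(index, outcome_if_present):
    """Respond with one outcome when a single cue is present, else the other."""
    def strategy(cues):
        return outcome_if_present if cues[index] else 1 - outcome_if_present
    return strategy


def multi_cue(weights):
    """Respond 1 when the summed weights of the visible cues are positive."""
    def strategy(cues):
        evidence = sum(w for w, present in zip(weights, cues) if present)
        return 1 if evidence > 0 else 0
    return strategy


def fit_strategies(trials, strategies):
    """Return, per strategy, the proportion of responses it predicts correctly."""
    return {
        name: sum(strategy(cues) == response for cues, response in trials) / len(trials)
        for name, strategy in strategies.items()
    }


def best_strategy(trials, strategies):
    """Pick the candidate strategy that matches the most responses."""
    scores = fit_strategies(trials, strategies)
    return max(scores, key=scores.get), scores


# Hypothetical candidates for a four-cue task (1 = rain, 0 = sun).
CANDIDATES = {
    "cue1_rain": one_cue(0, 1),
    "cue4_sun": one_cue(3, 0),
    "multi_cue": multi_cue((2, 1, -1, -2)),
}

# Made-up trials: (cue pattern, participant response).
TRIALS = [
    ((1, 0, 0, 1), 1),
    ((0, 1, 1, 0), 0),
    ((1, 1, 0, 0), 1),
    ((0, 0, 1, 1), 0),
    ((1, 0, 1, 0), 1),
]

name, scores = best_strategy(TRIALS, CANDIDATES)
```

Running the same fit over a sliding window of trials, rather than over the whole session, is one simple way to look for switches from one strategy to another.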

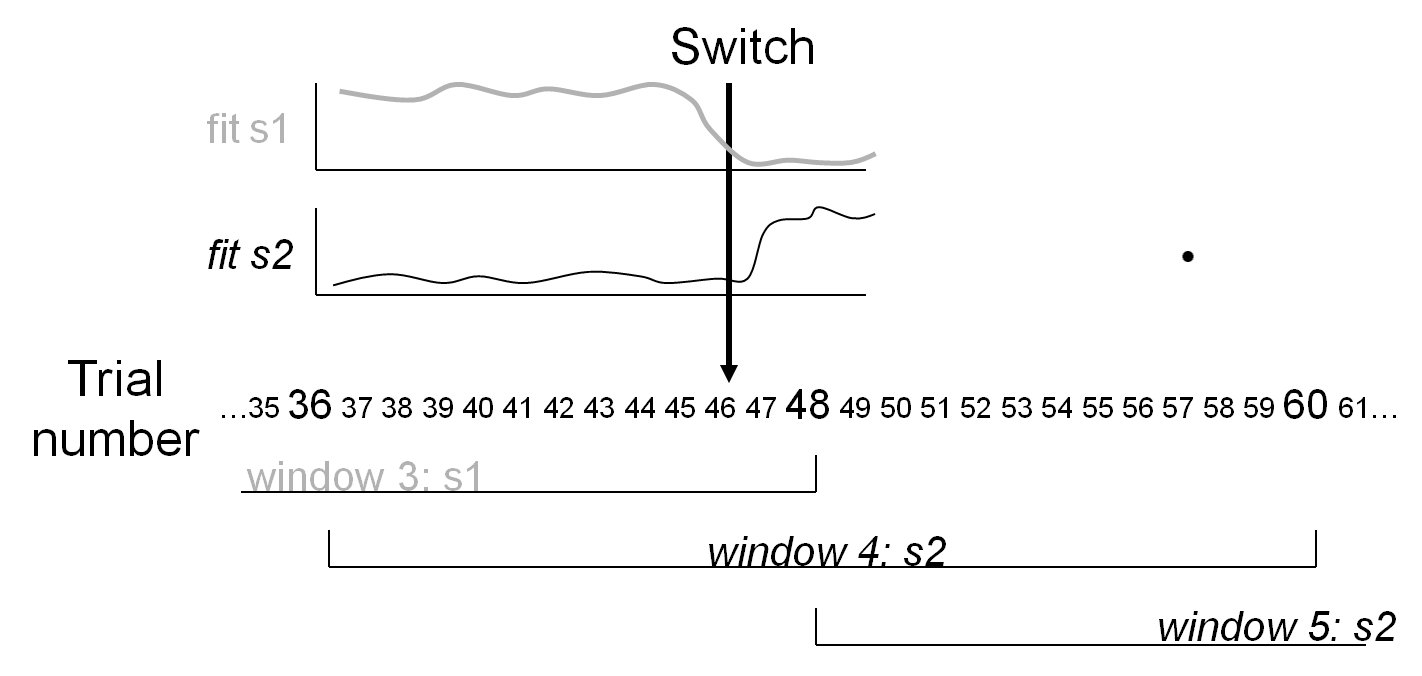

Strategy analysis can be done in Excel with the file below. Matlab code is also available upon request.
